Three impacts of machine intelligence

by paulfchristiano

I think that the development of human-level AI in my lifetime is quite plausible; I would give it more than a 1-in-5 chance. In this post I want to briefly discuss what I see as the most important impacts of AI. I think these impacts are the heavy hitters by a solid margin; each of them seems like a big deal, and I think there is a big gap to #4.

- Growth will accelerate, probably very significantly. Growth rates will likely rise by at least an order of magnitude, and probably further, until we run into severe resource constraints. Just as the last 200 years have experienced more change than 10,000 BCE to 0 BCE, we are likely to see periods of 4 years in the future that experience more change than the last 200.

- Human wages will fall, probably very far. When humans work, they will probably be improving other humans’ lives (for example, in domains where we intrinsically value service by humans) rather than contributing to overall economic productivity. The great majority of humans will probably not work. Hopefully humans will remain relatively rich in absolute terms.

- Human values won’t be the only thing shaping the future. Today humans trying to influence the future are the only goal-oriented process shaping the trajectory of society. Automating decision-making provides the most serious opportunity yet for that to change. It may be the case that machines make decisions in service of human interests, that machines share human values, or that machines have other worthwhile values. But it may also be that machines use their influence to push society in directions we find uninteresting or less valuable.

My guess is that the first two impacts are relatively likely, that there is unlikely to be a strong enough regulatory response to prevent them, and that their net effects on human welfare will be significant and positive. The third impact is more speculative, probably negative, more likely to be prevented by coordination (whether political regulation, coordination by researchers, or something else), and also I think more important on a long-run humanitarian perspective.

None of these changes are likely to occur in discrete jumps. Growth has been accelerating for a long time. Human wages have stayed high for most of history, but I expect them to begin to fall (probably unequally) long before everyone becomes unemployable. Today we can already see the potential for firms to control resources and make goal-oriented decisions in a way that no individual human would, and I expect this potential to increase continuously with increasing automation.

Most of this discussion is not particularly new. The first two ideas feature prominently in Robin Hanson’s speculation about an economy of human emulations (alongside many other claims); many of the points below I picked up from Carl Shulman; most of them are much older. I’m writing this post here because I want to collect these thoughts in one place, and I want to facilitate discussions that separate these impacts from each other and analyze them in a more meaningful way.

Growth will accelerate

There are a number of reasons to suspect that automation will eventually lead to much faster growth. By “much faster growth” I mean growth, and especially intellectual progress, which is at least an order of magnitude faster than in the world of today.

I think that avoiding fast growth would involve solving an unprecedented coordination problem, and would involve large welfare losses for living people. I think this is very unlikely (compare to environmental issues today, which seem to have a lower bar for coordination, smaller welfare costs to avert, and clearer harms).

Automating tech progress leads to fast growth.

The stereotyped story goes: “If algorithms+hardware to accomplish X get 50% cheaper with each year of human effort, then they’ll also (eventually) get 50% cheaper with each year of AI effort. But then it will only take 6 months to get another 50% cheaper, 3 months to get another 50% cheaper, and by the end of the year the rate of progress will be infinite.”
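The arithmetic behind this stereotyped story can be sketched in a few lines. This is only an illustration under the story's own assumption that each halving of cost also halves the time needed for the next halving; the function name and parameters are hypothetical, chosen for this example.

```python
def years_for_halvings(n, first_interval_years=0.5, speedup=2.0):
    """Total years needed for n successive 50% cost reductions.

    Assumption (from the stereotyped story, not an empirical claim):
    each halving takes 1/speedup as long as the one before it.
    """
    total = 0.0
    interval = first_interval_years
    for _ in range(n):
        total += interval
        interval /= speedup
    return total


# The partial sums 0.5 + 0.25 + 0.125 + ... approach 1 year but never exceed it,
# which is why the story puts "infinite" progress at the end of the year.
for n in (1, 2, 5, 10, 20):
    print(n, years_for_halvings(n))
```

The sum is a geometric series, so arbitrarily many halvings fit inside a single year. The real question is whether the assumed speedup per halving holds.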

In reality things are very unlikely to be so simple, but the basic conclusion seems quite plausible. It also lines up with the predictions of naive economic models, on which constant returns to scale (with fixed tech) + endogenously driven technology —> infinite returns in finite time.
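One way to see where the "infinite returns in finite time" conclusion comes from is the following toy derivation. It is a sketch under assumed functional forms, not a model taken from any particular paper: output $Y$ has constant returns in an accumulable input $X$ for fixed technology $A$, technology rises with scale as $A = cX^{\varepsilon}$ with $\varepsilon > 0$, and a fixed share $s$ of output is reinvested. All symbols here are introduced for illustration.

```latex
\begin{align*}
Y &= A X, \qquad A = c X^{\varepsilon}, \qquad \dot{X} = s Y
  \quad\Longrightarrow\quad \dot{X} = s c\, X^{1+\varepsilon} \\
\frac{d}{dt}\left(X^{-\varepsilon}\right) &= -\varepsilon X^{-\varepsilon-1}\dot{X} = -\varepsilon s c
  \quad\Longrightarrow\quad X(t)^{-\varepsilon} = X_0^{-\varepsilon} - \varepsilon s c\, t \\
X(t) &= X_0 \left(1 - \varepsilon s c\, X_0^{\varepsilon}\, t\right)^{-1/\varepsilon},
  \qquad t^{*} = \frac{1}{\varepsilon s c\, X_0^{\varepsilon}}
\end{align*}
```

With $\varepsilon = 0$ (fixed technology) the same equation gives ordinary exponential growth. Any $\varepsilon > 0$ makes $X$ diverge at the finite time $t^{*}$, which is the sense in which the naive model predicts a singularity.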

Of course the story breaks down as you run into diminishing returns to intellectual effort, and once “cheap” and “fast” diverge. But based on what we know now it looks like this breakdown should only occur very far past human level (this could be the subject for a post of its own, but it looks like a pretty solid prediction). So my money would be on a period of fast progress which ends only once society looks unrecognizably different.

One complaint with this picture is that technology already facilitates more tech progress, so we should be seeing this process underway already. See [3] below.

Substituting capital for labor leads to fast growth.

Even if we hold fixed the level of technology, automating human labor would lead to a decoupling of economic growth from human reproduction. Society could instead grow at the rate at which robots can be used to produce more robots, which seems to be much higher than the rate at which the human population grows, until we run into resource constraints (which would be substantially reduced by a reduced dependence on the biosphere).

Extrapolating the historical record suggests fast growth.

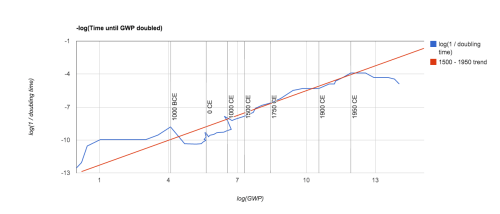

Over the course of history the proportional rate of growth has increased substantially, from more than 1,500 years per doubling around 10k years ago, to around 150 years per doubling in the 17th century, to around 15 years per doubling in the 20th century. A reasonable extrapolation of the pre-1950 data appears to suggest an asymptote with infinite population sometime in the 21st century. The last 50 years represent a notable departure from this trend, although history has seen previous periods of relative stagnation. (See the graph above, taken from Bradford DeLong’s data here).
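To make the doubling times quoted above easier to compare, they can be converted to annual growth rates. The snippet below is a minimal sketch using only the three round figures from the text; the function names are hypothetical.

```python
import math


def annual_growth_rate(doubling_time_years):
    """Constant annual growth rate that doubles a quantity in the given time."""
    return 2 ** (1 / doubling_time_years) - 1


def doubling_time(annual_rate):
    """Inverse of annual_growth_rate: years to double at a constant annual rate."""
    return math.log(2) / math.log(1 + annual_rate)


# Round figures quoted in the text (not a fit to the underlying data series).
for era, years in [("~10k years ago", 1500), ("17th century", 150), ("20th century", 15)]:
    print(f"{era}: {years} years per doubling = {annual_growth_rate(years):.3%} per year")
```

Each tenfold drop in doubling time corresponds to roughly a tenfold rise in the annual growth rate, so one more step of the same size would mean doubling roughly every 1.5 years.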

I don’t think that extrapolating this trend forward results in particularly robust or even meaningful predictions, but I do think it says one thing: we shouldn’t be surprised by a future with much faster growth. The knee jerk response of “that seems weird” is just not well-validated by the historical evidence in this case (though it is a very reasonable intuitive reaction for someone who lived through the 2nd half of the 20th century). I think that the more recent trend of slow growth may well continue, but I would also not be surprised if this stagnation was a temporary departure from trend, comparable to previous departures in severity and scale.

Wages will fall

I think this is the most robust of the three predictions. It seems very likely that eventually machines will be able to do all of the things that a human can do, as well as a human can do it. At that point, it would require significant coordination in order to prevent the broad adoption of machines as human replacements. Moreover, continuing to use human labor in this scenario seems socially undesirable; if we don’t have to work it seems crazy to make work for ourselves, and failing to make use of machine labor would involve even more significant sacrifices in welfare.

(There may still be demand for human labor in distinctively human roles, probably mostly by humans. And there may be other reasons for money to move around between humans. But overall most new valuable stuff and valuable ideas in the world will be produced by machines. In the optimistic scenario, the main reason that this value will be flowing to humans, rather than merely amongst humans, will be because of their role as holders of capital.)

Historically humans have not been displaced by automation. I think this provides some evidence that in the near term automation will not displace humans, but in the long run it looks inevitable, since eventually machines really will be better at everything. Simple theories do suggest a regime where humans and automation are complementary, followed by a regime in which they are substitutes (as horses were once complementary with carriages, but were eventually completely replaced by automation). Robin Hanson illustrates in slides 30-32 here. So at some point I think we should expect a more substantive transition. The most likely path today seems to be a fall in wages for many classes of workers, alongside rising wages for those who are still complementary with automation (a group that will shrink until it is eventually empty). “Humans need not apply” has been making the rounds recently, and despite many quibbles I think it does a good job of making the point.

I don’t have too much to say on this point. I should emphasize that this isn’t a prediction about what will happen soon, just about what will have happened by the time that AI can actually do everything humans can do. I’m not aware of many serious objections to this (less interesting) claim.

Human values won’t be the only things shaping the future

I’d like to explicitly flag this section as more speculative. I think AI opens up a new possibility, and that this is of particular interest for those concerned with the long-term trajectory of society. But unlike the other sections, this one rests on pretty speculative abstractions.

Many processes influence what the world will look like in 50 years. Most of those processes are not goal-directed, and push things in a somewhat random direction; an asteroid might hit earth and kill us all, the tectonic plates will shift, we’ll have more bottles in landfills because we’ll keep throwing bottles away, we’ll send some more radiation into space. One force stands out amongst all of these by systematically pushing in a particular direction: humans have desires for what the future looks like (amongst other desires), and they (sometimes) take actions to achieve desired outcomes. People want themselves and their children to be prosperous, and so they do whatever they think will achieve that. If people want a particular building to keep standing, they do whatever they think will keep it standing. As human capacities increase, these goal-oriented actions have tended to become more important compared to other processes, and I expect this trend to continue.

There are very few goal-directed forces shaping the future aside from human preferences. I think this is a great cause for optimism: if humans survive for the long run, I expect the world to look basically how we want it to look. As a human, I’m happy about that. I don’t mean “human preferences” in a narrow way; I think humans have a preference for a rich and diverse future, that we care about other life that exists or could have existed, and so on. But it’s easy to imagine processes pushing the world in directions that we don’t like, like the self-replicator that just wants to fill the universe with copies of itself.

We can see the feeble beginnings of competing forces in various human organizations. We can imagine an organization which pursues its goals for the future even if there are no humans who share those goals. We can imagine such an organization eventually shaping what other organizations and people exist, campaigning to change the law, developing new technologies, etc., to make a world better suited to achieving its goals. At the moment this process is relatively well contained. It would be surprising (though not unthinkable) to find ourselves in a future where 30% of resources were controlled by PepsiCo, fulfilling a corporate mission which was completely uninteresting to humans. Instead PepsiCo remains at the mercy of human interests, by design and by necessity of its structure. After all, PepsiCo is just a bunch of humans working together, legally bound to behave in the interest of some other humans.

As automation improves the situation may change. Enterprises might be autonomously managed, pursuing values which are instrumentally useful to society but which we find intrinsically worthless (e.g. PepsiCo can create value for society even if its goal is merely maximizing its own profits). Perhaps society would ensure that PepsiCo’s values are to maximize profit only insofar as such profit-maximization is in the interest of humans. But that sounds complicated, and I wouldn’t make a confident prediction one way or the other. (I’m talking about firms because it’s an example we can already see around us, but I don’t mean to be down on firms or to suggest that automation will be most important in the context of firms.)

At the same time, it becomes increasingly difficult for humans to directly control what happens in a world where nearly all productive work, including management, investment, and the design of new machines, is being done by machines. We can imagine a scenario in which humans continue to make all goal-oriented decisions about the management of PepsiCo but are assisted by an increasingly elaborate network of prosthetics and assistants. But I think human management becomes increasingly implausible as the size of the world grows (imagine a minority of 7 billion humans trying to manage the equivalent of 7 trillion knowledge workers; then imagine 70 trillion), and as machines’ abilities to plan and decide outstrip humans’ by a widening margin. In this world, the AIs that are left to do their own thing outnumber and outperform those which remain under close management of humans.

Moreover, I think most people don’t much care about whether resources are held by agents who share their long-term values, or machines with relatively alien values, and won’t do very much to prevent the emergence of autonomous interests with alien values. On top of that, I think that machine intelligences can make a plausible case that they deserve equal moral standing, that machines will be able to argue persuasively along these lines, and that an increasingly cosmopolitan society will be hesitant about taking drastic anti-machine measures (whether to prevent machines from having “anti-social” values, or reclaiming resources or denying rights to machines with such values).

Again, it would be possible to imagine a regulatory response to avoid this outcome. In this case the welfare losses of regulation would be much smaller than in either of the last two, and on a certain moral view the costs of no regulation might be much larger. So in addition to resting on more speculative abstractions, I think that this consequence is the one most likely to be subverted by coordination. It’s also the one that I feel most strongly about. I look forward to a world where no humans have to work, and I’m excited to see a radical speedup in technological progress. But I would be sad to see a future where our descendants were maximizing some uninteresting values we happened to give them because they were easily specified and instrumentally useful at the time.

Thanks, this is a particularly clear framing.

Paul, cases where the division between humans and machines becomes problematic seem to be missing from this analysis. For example, I’m not sure how this analysis applies if our machines are Robin’s uploads, or if humans and machine intelligences become hard to separate (maybe in the direction Kurzweil is talking about). Discussion of these possibilities seems like it should inform the specific arguments you gave for drops in wages and human values not shaping the future so much.

If machines are Robin’s uploads, then they are likely to have goals which are more similar to the goals of the humans they are copied from. I imagine we could also build machines that pursue goals similar to their human owners, or machines whose only goal is to be helpful to human owners. It seems like points 1 & 2 are unchanged, and for point 3 we still fit somewhere on a complicated spectrum between “machine goals are just the same as human goals” and “machine goals are unrelated to human goals.”

If humans do productive work by interfacing with computers, then I’d say that we haven’t yet automated all of the useful things humans do, namely however they add value to that interaction. This seems like it fits into the same picture without modification, it’s just a closer form of collaboration. Alternatively, interfacing with computers more closely may be a way to stay in charge rather than contributing productive labor, which falls under a very broad range of responses to change #3. Do you think a more substantive revision is required?

Overall, it seems like #1 and #2 are more robust. Point #3 is more along the lines of “X becomes possible,” though there are many things we might do that would interfere with X actually happening, and many ways in which X could not happen even if we didn’t work to prevent it.

I think our disagreement was partly semantic in the case of uploads. I would have been inclined to describe a high-fidelity upload-dominated situation as “controlled by humans,” though there is a meaningful sense in which it isn’t (it’s not controlled by biological humans).

In the other case, it seems like there is a substantive disagreement. Let’s imagine a scenario in which Paul Christiano is slowly upgraded piece-by-piece into something that we could call a “machine intelligence.” Suppose various apps and modules are added little by little, but the motivational system remains the same, though various cognitive powers are greatly upgraded. The resultant entity is not biologically human. Some say the resulting thing is Paul (as I probably would), and some other people disagree. Now imagine that this happens with everyone, or almost everyone.

Now your latter two takeaways in the beginning seem perhaps strictly true (under the interpretation that biological humans count as humans), but misleading or less relevant if we’re more inclined to say that the humans became machine intelligences.

I think these cases are important (regardless of how likely they are); one reason I feel comfortable with the three effects I listed, and one reason that I chose this breakdown in particular, is that they seem to me to be very robust even in border cases like this one. Whether or not particular foreseeable border cases are important, I would guess that the future will have many characteristics that we don’t anticipate, and that useful analyses will need to be robust enough to apply anyway.

In the upload/upgrade scenarios I think we should distinguish two situations. In one, the use of the human is essential: we can implement substantial improvements to human cognition, but cannot replace it wholesale. In the other, the human is superfluous: it is a matter of preference whether to use this process of upgrading or to simply start from scratch.

The former case is one in which we do not yet have broadly human-level AI, though we may have very superhuman systems composed of both humans and machines (as we arguably already have today). I am interested in the second scenario, which I think will probably come to pass eventually unless we simply stop working on AI.

In terms of values, it seems that there are many possible mechanisms by which you might ensure that machine intelligences share human values, or even the values of a particular human. Continuously deforming a human into a machine intelligence seems to fit nicely into this overall space, as does making a machine intelligence which closely mirrors human intelligence in many or all respects. The effect of machine intelligence is to introduce potential competitors, rather than to eliminate human values from the picture. (You could imagine these enhanced humans working alongside machine intelligences which were completely alien, and our decisions together with tech constraints would determine the relative influences of the two groups.)

In terms of wages, I think we probably have a more definitional dispute. In the upgrading scenario, for example, human labor still becomes worthless. We can imagine a bunch of useful machine intelligences being produced continuously, one way or the other. A human can pay a cost (perhaps a very small one, if the upgrading process is the best way to produce useful machine intelligences–though in that case I suspect we don’t yet have broadly human-level AI) in order to ensure that one of these machine intelligences is somehow psychologically continuous with the original human. But the human isn’t going to get paid to do this, because this upgrading process isn’t actually creating anything useful that couldn’t have been created for free (for example by copying an existing upgraded human). The human without capital at this stage is completely destitute in an unprecedented way (for a healthy human).

In particular, note that if machine intelligences are in fact able to capture some surplus by working, then people would be willing to pay to influence the values of machine intelligences. In this scenario a human would not even be able to afford the resources necessary to be upgraded / keep themselves alive (because e.g. other people would be willing to subsidize their own upgrades and copies thereof in order to influence the values of the resulting machine intelligence). Perhaps if humans had already all been mostly-upgraded prior to the development of human-level AI this cost would be low. But in that case what’s going on is just that the humans own some capital, and there doesn’t seem to be a meaningful distinction from other scenarios in which humans can buy into the system because they own a bit of capital.

Of course you could imagine policy responses which could get around this, for example a small transfer to everyone. In the upgrading scenario this might take the form of allowing free access to the kinds of upgrades that would make someone economically competitive. But again, I don’t see how this is meaningfully different from other kinds of transfers, which people can then spend to slightly push the values of society in their preferred direction, or in particular to ensure their own continued existence.

For me the main interesting thing about the upgrading scenario is that the problem of designing machine intelligences may be solved by systems much smarter than existing humans. But in this respect it seems very analogous to other paths to human enhancement, via better training and methodology, better collective decision-making, biological interventions, or whatever else.

You seem to presume that outcomes are mainly determined by the desires of goal-directed agents, weighted perhaps by their wealth. But in a world where no one is in charge, competition can drive outcomes that individuals don’t particularly want. Such competition can even be the main drive that determines the goals of the agents.

Yes, this is my view. I agree that competition can change the course of the future, as can activities aimed at satisfying short-term interests, as can natural processes. They all seem to point in somewhat random directions, to be diminishing in importance, and to be unlikely to have very long-term effects besides extinction or value changes. I don’t think the latter is very plausible today, as I think biologically determined values of humans mostly dominate (an empirical claim on which I think we disagree) and are slow to change. But I do think that unintended values changes will become more plausible as AI becomes more important, which is the reason that impact #3 makes the list.

Assuming a world which is similar enough to ours that there’s still a large biological human population, and the concept of “wages” makes sense, it seems silly to analyze them without also analyzing the impact of AI on property rights. These vary hugely from country to country and time period to time period, and consequently have large effects on people’s lives. To name a few historical examples:

– slave societies have a large section of the population without property rights entirely;

– decentralized societies, like feudal Europe, have property rights only for those who are militarily powerful enough to defend them;

– Communist societies have no real property rights, and the government takes or gives you whatever it feels like;

– social democratic states have no property rights on about half your income (it’s taxed away), but strong protections for the other half, and you also have rights which are like “property” (guarantees of food, healthcare, housing, etc.) without being “private”;

– the US in 2014 has strong rights for money in your bank account (the government can’t take it without lots of complicated procedures), but weak rights for land you own (the government can tell you how you can and can’t use it in extremely fine detail);

and so on and so on. Imagining possible future scenarios – not claiming any of these are likely, just to explore the space some –

– no one really ‘owns’ anything, but no one cares, because governments will give you whatever you reasonably want on demand;

– everyone is ‘wealthy’ in some nominal sense, but it doesn’t matter much, because there are severe restrictions on what you can and can’t buy;

– everyone is ‘poor’ in some nominal sense, but it doesn’t matter much, because you spend all your time in VR where everything is free;

– a favorite dystopic one, where biological humans own everything and uploads can’t enforce their contracts, and so are forced to work for whatever humans will give them;

– and of course the counter-dystopia, where uploads will only honor cryptographic contracts biohumans can’t execute, and feel free to use their superior speed and numbers to steal humans’ stuff;

and on it goes, it’s easy to make new ones up and sci-fi authors have done so many times. To my knowledge, though, very little serious analysis has been done on this.

To make a more general point, I think it’s very easy to overstate how much ‘coordination’ is needed to achieve similar behavior when you have broadly similar culture (people talk to each other) and similar incentives. For an example where that *doesn’t* apply, there are about two hundred countries in the world, and most have militaries to defend against each other. Everyone would be better off if everyone reduced their military spending. However, this runs counter to culture (‘support the troops’) and incentives (‘we’d be defenseless’), so it doesn’t happen. Classic tragedy of the commons.

However, for another example, consider the San Francisco Bay Area. The Bay Area contains nine counties and 101 cities. Mostly, they are all legally independent, and state/federal law grants them wide latitude in how they conduct their affairs. Yet, each one has extremely similar policies for obtaining a building permit (you have to hold a public hearing, please the political leadership, meet requirements for environmentalism, parking, aesthetics and about a dozen other things, and none of the neighbors can object). There would in some sense be enormous economic benefits from defecting (more buildings = $$$ for lots of businesses), but those benefits don’t accrue to the decisionmakers and so nothing happens. (I’m not saying this scenario applies to AI economies, just trying to give an example to show things in this class are possible.)

Thanks for the post. 🙂

From an abstract perspective, do you see a fundamental difference between “the self-replicator that just wants to fill the universe with copies of itself” versus a future driven by human values? Both are equally arbitrary and have a similar tenor to them. Humans are also self-replicators trying to fill the universe with things they want (if not just exact copies of themselves).

One view (which I sort of agree with) is that we care more about human values (like reducing suffering) for the parochial reason that we are humans.

Another view is that human-driven futures might be more complex than replicator futures, although I do think replicators would build quite intricate societies of mind as well when trying to learn about the universe for instrumental reasons.

Also, if, as you suggest, we care about the human-level machines for their own sakes, they would seem to dominate the (short-run) welfare calculations. That the wages of the machines would fall might be much more important than the impact on humans — except that presumably the machines wouldn’t mind being slaves because of how they’re constructed (depending on whether they’re bottom-up AIs or uploads or some mix).

Finally, this post didn’t take a stand on the question of hard-vs.-soft takeoffs. My guess from the discussion is that you favor soft-takeoff scenarios?

It is difficult, sometimes, to separate human “values” so that we stop anthropomorphizing things. The fact is these intelligences will be vastly different from us. We need to stop having a discussion that stresses humanization of something that did not share our particular evolutionary past and recognize it for what it is: The alien product of our intellect.

We also need to stop discussing the human v. machine thing, too. This is a fruitless debate, one akin to using hands to push nails into wood or using a hammer. No one expects a hammer to look like a hand, so why should these devices hold anything at all in common with us?

These devices are products of our mind, designed to accomplish what we cannot. They should be different from us, in fact, as different from us as the problem requires. This difference does not mean an inability to establish common ground, hindering growth or preempting resource. This will be true as sentience arises.

This extends to the continued concept of capitalism as preeminent when everything clearly points to the need for an alternative form of economics. Capitalism might well be the founding engine that creates wealth but the distribution of that wealth must benefit every human and not just some. It begins to form a civilization where individuals have the backing they need never to fail. Imagine what you might try if you knew you could not fail. And let’s not kid ourselves about the alternative: A future of jousting on the backs of motorcycles for a few cans of gasoline or Enfamil. There are 7.2B humans and the number is growing, as it should. We have an entire solar system out there waiting for us and a local cluster and galaxy beyond that. We will need every brain operating at its utmost. I worry there will not be enough of us to work on the real problems we will face in that stark reality. It really is about galactic expansion or extinction. This planet already has an impressive record of the latter.

So, like the hammer, we will merge with our technology. We already see this happening all around us. It will not be a competition but cooperation, not coopting but cooperation. The word of the day becomes the phrase of the day: Mutual growth. Why? Because the more we know, the more we understand the depth of what we do not know and phrases carry us further.

I think when we realize and provide for every human being we then can begin the real work of answering what we want, who we are, what it means to be human and where we go from here. New values will emerge and we will look back on what we thought were “human” values that now seem quaint.

The one thing that best summarizes the human condition is the need for change. Unlike other animals, whose specialized bodies forever trap them into ideas of bears, beavers or swans, our generic body required us to change how we think about ourselves and how we use the environment around us. We altered that environment to suit us – to fit better our ideal of what we thought it should be like to make our own lives easier.

This emerging technology is similar – a new way to create a shell about us, a longer, different stick to poke about in the dark. The fact that we can make it as intelligent or more intelligent than us speaks to our loneliness and our need for a friend. It also speaks to our boredom. It speaks to our need to implement change faster. And maybe that is the thing that best defines humanity: The need for change.

The rest is just plain bullshit.

What scenario are you imagining where the “net effects on human welfare” of plummeting wages “will be significant and positive”? I was under the impression that low or nonexistent income correlated strongly with all sorts of deleterious effects on well-being.

1. wages are not the same as income

2. if labor is free, then everyone can have as much of it as they want (well, all of the flesh and blood people can have as much of it as they want)

3. I am very dubious about extrapolating an observed correlation between lack of work and unhappiness. Being fantastically wealthy, and generally living in a world where massive amounts of energy are expended on making peoples’ lives good, just seems like a more important and robust driver of well-being than having a job. I think there are a number of more plausible explanations for the observed correlations (many of which would not apply in a world where no one needed to work).

4. if people really need work to be happy, then someone more resourceful can make it for them

5. many people already spend some or all of their time playing sports, playing competitive games, making art, or working on non-productive hobbies, none of which contribute meaningfully to productive activity. These people don’t seem to have systematically unhappy lives. The vast majority of people claim to enjoy these activities more than work, and many people who do not need to work focus their time on these activities.